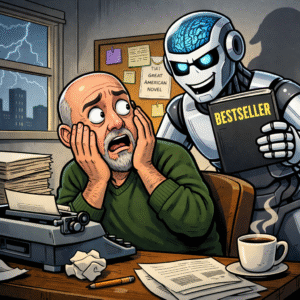

Two posts ago, I posted some positivity about AI in general, and ChatGPT in particular. The responses were . . . energetic. I think there’s a misperception of what AI is capable of, and what it is not. Without a doubt, there’s never been a worse time in history to be a graphic artist, an entry-level coder, or perhaps even a paralegal. The days of paying a “professional” for boilerplate documents are over.

With the appropriate prompts, AI is capable of planning trips, managing budgets, and, apparently, guiding bombs to the precise air shaft where they can do the most damage.

But it will never be able to console a child whose heart has been broken. It will never replace that moment with your beloved, sitting by a fire with a glass of wine or staring up at the stars. It will never replace human emotions. And therein lies my disconnect with the TKZ hivemind over the negative impact of AI on what we do.

Novels are recorded humanity, not just words spelled correctly on the page. They are, in fact, emotional experiences–the equivalent of consoling that child. A computer cannot convey that for which it has no frame of reference, and that’s why it cannot replace us.

But I could be wrong, so don’t take it from me. For about two months, I have been pumping everything I’ve written into ChatGPT to see if it can learn style. I actually think it can, but I haven’t wrangled all of that piece yet. I know what I think about the long-term impact of AI on the writing world, but I wanted to hear what the machine had to say about it. So, I asked it this question:

“Using what you have learned from my style, write a 700-word post for a writing blog. Your topic: Will AI ever evolve to the point that it can replace the human voice in writing novels? Cite sources.”

I didn’t want my thumb on the scale to produce the answer I wanted, so I kept it open-ended, and because I’m talking to a machine, I wanted to know how it analyzed the data, so I told it to cite sources. Here’s the response I got after fewer than 5 seconds of analysis:

Will AI eventually replace novelists?

The short answer is no. Not in the way people fear.

Artificial intelligence will certainly become a powerful tool for writers, but replacing the human voice in fiction is another matter entirely.

To understand why, it helps to understand what AI actually does.

Large language models—systems like those developed by companies such as OpenAI, Anthropic, and Google DeepMind—are trained on enormous bodies of text. During training, the system analyzes patterns in language: word order, sentence structure, context relationships, and statistical probabilities of what word or phrase is likely to come next. The result is a machine that can produce remarkably fluent prose.

But fluent prose is not the same thing as storytelling.

According to a widely cited analysis from the Stanford Institute for Human-Centered Artificial Intelligence, large language models operate by predicting the next most likely token in a sequence based on training data patterns. They do not possess intention, memory in the human sense, or lived experience. They generate language by probability, not by understanding.

That distinction matters more than most people realize.

A novel is not merely a sequence of grammatically correct sentences. A novel is the product of perspective—of someone deciding which details matter, which emotions are worth exploring, and which moments deserve attention. Those decisions are rooted in experience. In memory. In the messy accumulation of living.

Machines don’t have that.

When a writer describes grief, fear, betrayal, or love, the power of the scene comes from a human mind recognizing something true. Even when the circumstances are fictional, the emotional substrate is real. The writer is drawing on life—on personal experience or on empathy for experiences observed in others.

An AI system cannot do that. It can simulate the language of grief or love, but simulation and experience are not the same thing.

Researchers at the Massachusetts Institute of Technology have repeatedly pointed out that modern language models lack what cognitive scientists call grounded understanding. They manipulate symbols without direct connection to the physical or emotional realities those symbols describe. In other words, the machine knows the words, but it does not know what the words mean in the human sense.

That limitation shows up most clearly in narrative voice.

Voice is not vocabulary. It’s worldview. It’s the sum of a writer’s instincts about what matters in a scene, how characters behave under pressure, and which truths are worth putting on the page. Voice grows out of the author’s background, personality, profession, culture, and history. Two writers can describe the same event and produce completely different stories because their lives have taught them to see the world differently.

A statistical model can blend styles. It can approximate tone. But it cannot originate a worldview because it does not possess one.

That doesn’t mean AI has no role in writing. Quite the opposite.

Used properly, AI can be a very useful assistant. It can help brainstorm ideas, summarize research, test plot possibilities, or identify structural problems in a manuscript. Some writers already use it as a kind of tireless research intern, capable of quickly gathering background information that might otherwise require hours of searching.

In that sense, AI resembles other technologies that once stirred anxiety among writers. Word processors didn’t eliminate authors. Neither did spellcheck. Neither did online research tools. Each new technology changed how writers work, but none replaced the human imagination at the center of storytelling.

The same will almost certainly be true for artificial intelligence.

The technology will get better. The prose will become smoother. The tools will become faster and more useful. But the essential element that makes novels resonate with readers—the human voice behind the words—remains stubbornly difficult to replicate.

Because fiction isn’t built only from language. It’s built from life.

Readers can sense the difference between words that were assembled and words that were meant. The former may be competent. The latter are alive.

And that difference is exactly why novelists aren’t going anywhere anytime soon.

So, there you have it, folks, straight from HAL’s mouth. Thoughts?

“It can help brainstorm ideas, … or identify structural problems in a manuscript.”

Nope.

Structure is a human concept, a summary of the EFFECT of plot, not a mechanical thing (no matter how mechanically I use it, I’m still choosing what goes into the prompts). Books have beginnings, middles, and ends. So do shoes.

And as for ideas, all it can do is give you a summary of what it can find ‘out there’ – nothing original or without precedent.

The rules come from the human side. Why does this structure work better for this story than some other one? That is a judgment call. ‘AS’ has no judgment – it has no experience to make judgments from, no way to tell good from bad.

‘If you could pay a research assistant’ to do it may be its limits – it is fast, but ignorant. I have been supremely unimpressed by the summaries created by HAL floating around in, say, Google searches. Ludicrously wrong, because there is no experience to support its pronouncements, either way.

As for style, it seems to require a lot of very careful prompting – from a human – to produce the obvious.

The best uses are things like medical expert systems, properly vetted on data checked by humans. Those it can check fast, looking for similarities in the REPORTED information in medical papers, for example, giving some weight to even rare symptoms, where a human would get bored by the amount of data to be processed, and imitating doctors who become experts precisely because they have seen or read about so many cases in their specialties.

Not worried about being superseded.

I avoid AI because of its impact on the environment, the fact that it is enabled by theft, and that using it fills the pockets of billionaires. What it does or does not do artistically is moot. If I can’t make it as a writer by using my own brain then I am not a writer, and using a machine to assist me is not going to change that reality.